New Tool Predicts Worldwide Heart Disease By Country

More than a century after it was first identified, there is still no cure for Alzheimer’s disease. Among the various avenues researchers have explored to understand the causes of the disease and find potential treatments, the role of blood vessels has increasingly become a focus – in particular the “blood-brain barrier”, a protective layer of cells that regulates what can enter the brain from the blood.

The brain has a dense network of blood vessels which, if laid end-to-end, would stretch the length of more than 5,000 football pitches. Unlike the blood vessels in other parts of the body, however, these vessels restrict what can enter the brain from the bloodstream. They do this because the cells that make up the vessel wall, such as endothelial cells and pericytes, physically overlap and form tight junctions that control the entry and removal of substances into and out of the brain.

Hawkes et al. 2014 Brain Pathol. 24:296-403

The blood-brain barrier is usually very tight in young, healthy people. This means that the brain controls what substances get in, which is important for proper brain function. However, this high level of control can be a problem for treating brain diseases because drugs in the bloodstream can be kept out of the brain.

A lot of research is being done to try to get around this problem, for example by attaching the drug to a substance that the brain normally takes up, such as glucose.

Other approaches include attaching the drug to nanoparticles that are small enough to pass between the cells of the blood-brain barrier, or loosening the barrier directly using ultrasound. However, the ultrasound approach needs to be applied very carefully, because opening the blood-brain barrier can also let toxins and other damaging substances into the brain and cause injury.

As well as acting as a gatekeeper for entry of substances into the brain, the blood-brain barrier is important for removing waste products from the brain. One of these products, β-amyloid, is a key player in Alzheimer’s disease.

β-amyloid is made in everyone’s brain. In the healthy brain, it is quickly removed by moving across the blood-brain barrier into the blood. In the older brain, this movement is slower, allowing β-amyloid to build up. As it builds up, β-amyloid damages both the brain and the blood-brain barrier, which in turn causes further damage by reducing the removal of β-amyloid from the brain and allowing toxins to enter from the blood.

This ongoing cycle of damage is thought to contribute to the development of Alzheimer’s disease.

Because the cells that make up the blood-brain barrier are very small, it is difficult to see changes in the health of the blood-brain barrier in a living person. But recent advances in brain imaging have helped to solve this problem.

A research group at the University of Southern California has used a new form of magnetic resonance imaging (MRI) to measure blood-brain barrier breakdown in the brains of young people, older people, and people with mild cognitive impairment. People with mild cognitive impairment don’t have overt dementia, but are at high risk of developing Alzheimer’s disease.

The group found that the tightness of the blood-brain barrier decreases naturally with age, and that the blood-brain barrier was “leakier” in old people with mild cognitive impairment, compared to old people who were cognitively normal. These findings suggest that the function of the blood-brain barrier declines with age, and they support the belief that loss of blood-brain barrier integrity plays a role in the development of Alzheimer’s disease.

More and more work points to a role for blood vessels and the blood-brain barrier in the onset of Alzheimer’s disease dementia, which could form the basis for new therapies to prevent and treat the disease. Although some promising work suggests that increasing the levels of proteins that transport β-amyloid across the blood-brain barrier may help to decrease its accumulation, much work remains before therapies that target this barrier can be developed.


This article was originally published on The Conversation.

Read the original article.

Unexpected results are, in a sense, the point of lab experiments: if laboratory studies never revealed the unforeseen, there would seldom be a reason to perform them. It can be a problem when scientists don’t get the results they wanted or expected, but at other times the unexpected leads to discoveries no one thought to look for in the first place. Several famous discoveries in the history of science happened entirely by accident. The latest accidental discovery might make one of the most aggressive types of cancer more treatable than ever before.

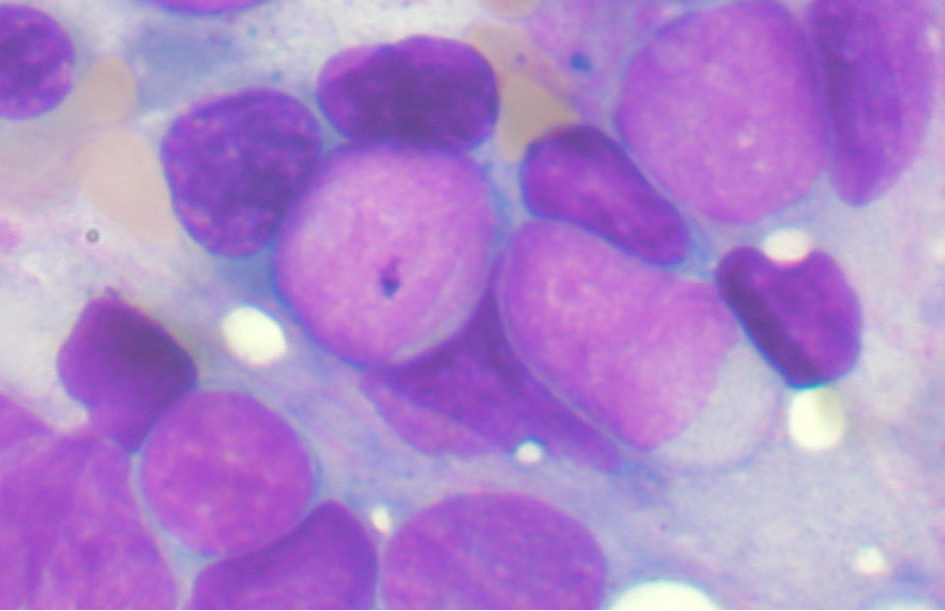

Scientists at Stanford recently discovered a way to force leukemia cells to do something amazing: become mature immune cells. The researchers were actually trying to stabilize cancer cells so they could keep them alive longer in order to study them. The method they used to keep the cells alive caused them to develop into immune cells that may one day help the immune system attack cancer cells!

You can read the study in full at Proceedings of the National Academy of Sciences.

Acute lymphocytic leukemia (ALL) is a particularly rapidly progressing cancer in which the immature cells that should differentiate into white blood cells called lymphocytes instead become cancerous. ALL is classified into several types based on which kind of lymphocyte (B cell or T cell) the cancer cell originated from.

The scientists were simply investigating a common type of acute lymphoblastic leukemia called precursor B cell ALL, or B-ALL. B-ALL starts when a B cell goes rogue, mutating away from usefulness at an early stage of its maturation. The immature cells can’t fully differentiate into the B cells they were otherwise destined to become, because they lack the transcription factors required for normal development. Transcription factors are proteins that attach themselves to sections of DNA and switch designated genes on or off, depending on the type of transcription factor. Did you follow that? It’s a bit technical for the layman, but most of us understand DNA. Put simply, transcription factors act as DNA readers that help the cell decide which parts of its DNA to use in order to become a specific type of cell.

So, when a transcription factor misfires, activating the wrong section of DNA or failing to activate the correct one, the cell may not develop at all or may develop poorly. B-ALL is one of the nastiest types of cancer, and the prognosis for many patients is poor. The Stanford team wanted to study this villain but had trouble keeping the cancer cells alive outside of patients’ bodies.

Lead researcher Ravi Majeti reported in the lab’s news release: “We were throwing everything at the cells to help them survive.”

One of the techniques they used to keep the cancer cells from dying involved exposing them to a certain transcription factor. The exposed cells began to grow and change shape, taking on the form of a type of white blood cell called a macrophage, which is normally responsible for attacking damaged or mutated cells and foreign material.

The team found that the converted cells behaved like macrophages in several ways, such as surrounding and engulfing bacteria. Most notably, when these pseudomacrophages, derived from the cancer cells of mice, were put back into mice with cancer, they did not behave like cancer cells, and mice that did not have cancer did not develop it after being exposed to them.

The Stanford researchers believe the newly converted cells are no longer cancerous. Furthermore, they might even help the body’s immune system regroup and attack other, still cancerous cells. This could work because macrophages normally collect molecular tags (antigens) from abnormal cells they encounter and display them so that other cells in the immune system know what to attack. Since the converted macrophages were originally cancer cells, they may, in theory, already carry the right signals to help the immune system recognize the same kind of cancer.

Now that this principle has been identified as a possible method of treating one cancer, it might open the door to helping the immune system combat other cancers.

Related Cosmoso article: Pre-Darwinian Theory of Heredity Wasn’t Too Far Off


Jonathan Howard

Jonathan is a freelance writer living in Brooklyn, NY

OF MICE AND DIRT

At this very moment, a mouse is sniffing, scrambling, and snooping about his cage at the University of Texas Health Science Center at San Antonio. Although he appears a bit dogged and raggedy, he is actually quite animated and has a wild spirit. Next to his younger siblings he looks like the runt of the litter, but unlike them he boasts a coat of black fur lustrous enough to land him a spot in a Pantene commercial. The mouse’s name is Mouse UT2598, and he is 3 years old, which translates to roughly a century in human terms, as even lucky mice seldom live much past 4 years.

For the sake of this article, let’s call this mouse Mighty. So, what gives Mighty his punk-ass edge? It’s called rapamycin, a compound that is gradually revealing to scientists both its ability to slow the aging process and the havoc it can wreak on certain cells. Mighty’s heart and liver function as if he were an adolescent, and his tendons are far more resilient and elastic than is typical for a 3-year-old mouse. Evidence also shows that his organs are markedly less damaged than is standard at his age, leading to the theory that Mighty may be spared the effects of cancer for considerably longer than mice not involved in the experiments at the University of Texas. In fact, place him in a line-up alongside other mice his age and the differences are extraordinary.

The experiment involving Mighty and rapamycin is just one of many when it comes to investigation into the aging process. Research all over the globe entails experiments with a plethora of agents, not just rapamycin, some of which are already being used to treat a number of human conditions. Scientists are assembling the puzzle by employing tactics like manipulating genes, too. Essentially, they are all in a race for the big cheese: finding ways to extend longevity and ultimately trap the aging process and curb – if not cure (or even reverse) – it altogether.

As it stands, aging is the single most potent ingredient in the recipe for the age-related diseases that eventually shorten our lives, such as cancer, heart disease, Alzheimer’s and other degenerative brain disorders. While the main risk factors typically associated with heart disease are high cholesterol, obesity and high blood pressure, simply celebrating an 80th birthday can tip the scale towards a fatal heart condition – even if that 80-year-old resisted a single nibble of his birthday cake. As a result, scientists are beginning to treat aging as a disease in and of itself, one which may respond to treatment as simple as a doctor’s prescription.

Let’s get back to Mighty and his happy pill. Rapamycin has its origins in dirt: soil samples collected on a 1964 voyage to Easter Island eventually became the foundation for developing the antibiotic. Researchers have found that mice given the drug tended to live longer (about 20% longer) than mice that did not receive it. What makes rapamycin particularly interesting is that it works in a variety of species, including yeast, worms and flies, and it works even when started late in life. Thus, if studies of the drug are successful and actually lead to a human treatment, it could potentially benefit people who start taking it in their 60s or even 80s.

HOW DOES RAPAMYCIN WORK?

Rapamycin essentially hinders the activity of mTOR, a protein encoded by a gene that both people and mice possess. mTOR gives cells the “Okay To Go” for absorbing and expending energy. When there is an abundance of cell-chow, mTOR gets busy directing cells to take in nutrients and start growing. When food is in short supply, mTOR retreats like a turtle into its shell until the next opportunity to feast arises. Scientists have discovered that when cells are active and “eating”, they age faster, largely because they are working their cell-butts off to process food while belching out toxic free radicals at the same time. The mission, then, is to metaphorically slip the cell a “mickey” and keep it mellowed out without putting it at risk of starving itself – and that’s just what rapamycin is allegedly capable of doing.

But – and there’s always a but when it comes to this sort of thing – rapamycin is far from being the long-sought fountain of youth. In studies like the one starring our friend Mighty, rapamycin has resulted in bodies 30% smaller than average, while mTOR-manipulated mice developed cataracts and proved more susceptible to diabetes. The study also showed that males tend to progressively lose testicular function – not exactly a perk for a pill that may help you live longer.

Another disadvantage of rapamycin was revealed when kidney-transplant patients given the drug to decrease the risk of rejection showed signs of higher susceptibility to diabetes. Despite this, scientists are optimistic that by adjusting doses they can whip up a tantalizing cocktail that maximizes the longevity benefits while minimizing the risks.

GENETIC SOLUTIONS – AND COMPLICATIONS

For some researchers, the passcode for achieving longevity lies in our genes, particularly in telomeres, which are essentially the timekeepers of a cell’s life. Telomeres are protective stretches of DNA that cap the ends of chromosomes, a bit like the knot at the end of a thread. When a cell divides, it copies its chromosomes’ DNA, and with every division a little of each telomere is lost; once the telomeres have worn down far enough, the cell stops dividing. Certain factors, such as exposure to UV light, can make telomeres shorten faster and so cut cell lives short, making telomeres a perfect target for research in the scavenger hunt for anti-aging formulas.

Healthy human bodies typically maintain a perfect waltz between telomeres and telomerase, an enzyme that adds DNA back onto the ends of chromosomes, so that telomerase lengthens telomeres just enough to restore an ideal amount of the lost DNA. But in individuals with telomere syndromes, ailments such as bone problems, liver failure and immune disorders can occur. Learning how to tame malfunctioning telomeres could lead both to correcting their misbehavior and to new anti-aging formulas.

Just over a decade ago, Dr. Mary Armanios and her collaborator Carol Greider (the co-discoverer of telomerase), working together in a lab at Johns Hopkins University, met a student with a blood disorder that required regular blood transfusions. The young man was in his 20s and already had a full head of startlingly gray hair, which had begun to appear when he was only 9 years old. Curiouser and curiouser, Armanios and Greider learned that all of his paternal relatives had died at staggeringly young ages, including his grandmother, who died of osteoporosis in her 60s, his father, who died at 59 while waiting for a liver transplant, and his aunt and uncle, who both died of pneumonia in their 60s. What’s more, he himself had suffered from infections that landed him in the hospital multiple times a year, and he eventually died of a staph infection at 31. It turned out that all of the family members had a severe form of telomere malfunction known as dyskeratosis congenita.

Today, Armanios feels strongly that she will learn a lot by tinkering with telomeres, including how they might be engineered to halt aging-related ailments. Similar interest lingers at Stanford University, where Helen Blau and her co-researchers have treated cells with precise amounts of genetic material to encourage the lengthening of telomeres. In her study, the treated cells did not go on dividing indefinitely; instead, they eventually began to deteriorate at a normal pace, sparking hope in Blau and her team that the approach might be tested in people with dyskeratosis congenita. If the technique can target the abnormally fast-aging cells involved in the disease, it might also lead to ways of turning back the clock on normally aging human cells.

SIAMESE-MICE (YES, YOU READ THAT CORRECTLY)

There are promises of age-defiance in another quite odd experiment taking place at the Harvard Stem Cell Institute. There, researchers have pinned their hopes on the cousins of our friend Mighty, but in a very unusual way. To conduct their work, scientists conjoin two mice, Siamese-twin style, so that they share the same blood supply. Through this bizarre procedure, it’s been discovered that when an old mouse is paired with a young one, the older mouse shows more new nerve-cell growth in its brain than it otherwise would. The elders were also brawnier, and showed a reversal of the heart enlargement that typically goes hand-in-hand with aging.

The mysterious component that appears to be behind these findings is a protein called GDF11, which is normally plentiful in young animals’ blood and scarce in older blood. Naturally, researchers are clawing away at this amazing discovery in hopes of answering whether people who live longer have higher levels of GDF11, and whether people with low levels of the protein are more likely to develop age-related diseases such as heart problems, muscle atrophy and cognitive decline.

DOES LONGEVITY HAVE ITS ROOTS IN GREEK MYTH?

At the University of California, San Francisco, another experiment is taking place under the auspices of neurobiologist Dena Dubal and involves a hormone called klotho. Klotho is named after Clotho, the Greek Fate responsible for spinning the thread of life for mortals. Increased levels of klotho have been shown to extend the lives of animals by 30%. Approximately 1 in 5 humans carry enough of the protein to tack on an extra 3 or 4 years to their lives, and although it is not a passport to the land of immortality, it is certainly a bigfoot-sized step towards that mystical destination.

MORE BIRTHDAYS ON THE HORIZON

On that note, it is important to keep in mind that most, if not all, of this research is focused not on creating never-ending lifetimes but on extending healthy life a bit longer. While toying around with components like telomeres, klotho, and GDF11 offers promising results, it is not as easy as whipping up the perfect recipe and stocking Rite-Aid shelves. Further manipulation of longevity genes could involve some big-league moves like gene therapy and cell transplants, and of course first solving all of the questions that pop up with each answer a scientist reaches. While these riddles keep sending researchers back to the drawing board, the general outlook for battling the effects of aging is remarkably upbeat. Scientists seem to have a spirited momentum likely to bring about some serious headway in the not-too-distant future.

Cystic fibrosis (CF) has been an enigma for medical researchers, professionals and patients for years, and there are still many gaps in our understanding of how the disease works. Despite the missing puzzle pieces, however, new treatments for controlling the disease and enhancing quality of life for those living with it arrive year after year, and the prognosis for patients keeps improving. Following in the trend of this trailblazing work is a study currently taking place at Aston University in the UK, whose researchers describe their work as potentially life-prolonging for people with CF.

WHAT IS CYSTIC FIBROSIS?

Cystic fibrosis is a life-threatening, more often than not fatal, genetic disease that primarily affects the respiratory and digestive systems, as well as other body systems depending on the patient. People with CF (“CFers”) have a malfunctioning gene that produces a faulty protein, causing the body to make atypically thick and sticky mucus. This mucus obstructs the lungs and airways, leading to a lifetime of lung infections, and plugs up the pancreas so that the natural enzymes that help break down food cannot reach the gut. Consequently, vital nutrients cannot be absorbed, leading to severe malnourishment and, in many cases, either lifelong dependence on feeding tubes or death.

A LOOK AT THE STUDY

Led by Dr. Lindsay Marshall, scientists at Aston University are working on the premise that preventing lung infections during childhood can fend off life-threatening infections later in the lives of people with cystic fibrosis. The study aims to test the theory that bacteria found in children with the illness can disable their natural defense mechanisms, making them more susceptible to virulent bacteria that can infect the airways.

One of the nastiest milestones as CF ravages the body is infection with a deadly bacterium known as Pseudomonas aeruginosa (P. aeruginosa). This bacterium is notorious as an almost impossible-to-treat “superbug” that ultimately causes enough lung damage to kill a CFer. To determine the extent to which P. aeruginosa can be stopped in its tracks, Dr. Marshall and her team have created a detailed replica of a human CF airway, made entirely of human cells, in order to examine the treatment of early childhood infections with a spectrum of antibiotics. The model mimics the functions of an actual human airway and can show the deadly progression of cystic fibrosis.

Observing the deterioration process will help Marshall and her team learn information that could prove critical to developing new and improved treatments that prolong the lives of people with the illness and carry the CF community toward a cure. Marshall says the project will establish how accurately their layered human-cell model can be used to study the body’s natural defenses and how those defenses are affected by a whole range of inflammatory and infectious conditions, which could lead to the development and evaluation of new and enhanced treatments for CF and other diseases in the future.

ANOTHER DIMENSION TO THE STUDY

With financial support from a Humane Research Trust grant, Marshall’s study can take on another objective: developing new experimental techniques that reduce the number of animals used in respiratory studies. According to an article in the online magazine Science Daily, more than 115,000 animals were used in the UK last year in studies of respiratory conditions such as smoke-related lung diseases and asthma. Since animals do not naturally develop CF, using them is not only an inaccurate way of testing but also quite expensive, because they must be genetically altered to have the disease; it is certainly not as effective as using a human model that can be manipulated to have actual CF. In the article, Dr. Marshall states:

“We simply cannot use animals to model the decline in lung function seen in people with CF, the infections typical of people with CF or the administration and dosage of drugs required to treat the condition. Our human CF model…is extremely representative of what happens in human airways and is both ethically and scientifically an improvement upon current animal models.”

THE GOOD NEWS

Cystic fibrosis is among the most common life-threatening inherited diseases in the United States, affecting approximately 30,000 children and adults in the country and 70,000 worldwide. To appreciate the impact of cystic fibrosis research, including the ambitious project underway at Aston University, consider that in the 1950s CF was strictly a pediatric disease, and most children with it did not live long enough to attend elementary school. Today, people living with the disease can look forward to living well into their 30s, 40s and beyond. Indeed, thanks to new treatments and therapies, the average life expectancy of people with cystic fibrosis has risen to 41 years.

Most of us can recall sitting inside a hot, stuffy classroom reading countless chapters of history. We studied history so that we could learn from past mistakes and better the future of our society. However, that rationale didn’t make history any more interesting for most of us. Still, history did have its interesting points: those events that made everybody wake up and listen in awe as the chapter was discussed. The Bubonic Plague was one of those events that grabbed everybody’s attention.

As students, we learned that the Bubonic Plague, also known as the Black Death, hit Europe in the middle of the 14th century. As many of you may remember, it was devastating, killing huge numbers of people each time it struck. The first outbreak can be traced back to 1347, and outbreaks recurred several times over the following 400 years. By the time all was said and done, millions of people had died.

Now nobody is debating the fact that the Bubonic Plague killed millions of people; what is being debated is whether it was spread by rats or by gerbils. Scientists have long thought that black rats brought the Bubonic Plague to Europe from Asia, where it originated, and that fleas jumping from infected rats to humans led to further outbreaks. However, Professor Nils Christian Stenseth of the University of Oslo and a handful of his colleagues do not believe rats are to blame.

For rats to be to blame, the climate would have needed to favor rat-driven outbreaks. According to Professor Stenseth, “For this, you would need warm summers, with not too much precipitation. Dry but not too dry.” To test this, he looked at tree-ring records from Europe during the outbreak period. He and his colleagues discovered that the weather did not support the rat-driven outbreak theory: they found no relationship between local weather and plague outbreaks. Armed with this new information, these scientists believe the Bubonic Plague can be traced back to gerbils from Asia rather than rats. You can read more on the professor’s study in Proceedings of the National Academy of Sciences.

By Boris Schmid, University of Oslo and Nils Christian Stenseth, University of Oslo

The Black Death struck Europe in 1347, killing 30-50% of the European population in six violent years. It wasn’t a one-off epidemic: it signalled the start of the second plague pandemic in Europe that lasted for hundreds of years and only slowly disappeared from the continent after the Great Plague of London in 1665-1666.

These outbreaks were traditionally thought to be caused by rodent reservoirs of infected rats lurking in Europe’s cities, or potentially by rodent reservoirs in the wilderness. But our research, published in the journal PNAS, suggests otherwise.

If the “reservoir” thesis were correct, we would expect plague outbreaks to be associated with local climate fluctuations, through changes in agricultural yields and primary production in forests, affecting the number of urban and wildlife rodents, resulting in more plague. We found that Europe’s plague outbreaks were indeed associated with climate fluctuations – but in Asia.

The Black Death came to Europe from Asia. Historical records tentatively map it back to outbreaks in 1345 in Astrakhan and Sarai, two trade centres located on the Volga river near the Caspian Sea.

Where the Black Death came from before it hit those cities is not known, but fragments of DNA recovered from the teeth of plague victims in Europe show that the closest currently known living relatives of this medieval strain of the plague-causing bacterium Yersinia pestis are circulating in marmots and long-tailed ground squirrels in north-west China.

W. Ryan Easterday, CC BY-NC-SA

Some dominant narratives about the plague are poorly substantiated. One is that medieval plague was transmitted by black or brown rats and their infected fleas jumping to humans. This was indeed how the third plague pandemic in the 19th and 20th centuries spread, but there is poor archaeological evidence that there were many rats across much of northern Europe in the Middle Ages, aside from small populations of black rats in harbour towns, and there are no historical records showing that rats played a role in the disease.

“Rodent reservoirs” represent another dominant narrative. The idea is that the disease was introduced in medieval Europe once (the Black Death epidemic) after which it settled into local rats or wildlife rodents, and continued to cause outbreaks in European cities for hundreds of years.

Schmid et al / PNAS, CC BY-NC-SA

This is the narrative we aimed to substantiate through evidence, but which we ended up challenging. Using tree-ring based climate records from Europe and Asia, we showed that plague reintroductions into European harbours were associated with periods of wet conditions, followed by a drought, across large parts of Central Asia.

These conditions were tough for rodents in the region, traditionally the hosts of the plague bacterium, and their numbers would plummet. Infected fleas would seek new hosts, often latching onto passing human traders or their camels, though we don’t yet know exactly how the plague made the journey westward. What we do know is that, 14-16 years after the rodent-killing drought, we would often find plague reintroduced into Europe.

The chart below shows that these climate fluctuations in Central Asia preceded the Black Death in 1347, the Italian plague of 1629, and the Great Plague of Marseille a century later, but notably not the London plague of 1665 or the outbreak in Vienna the following decade.

Schmid et al / PNAS, CC BY-NC-SA

This followed a pattern that we associate with current-day plague outbreaks. What is the implication of such a finding? In terms of our understanding of the past plague pandemics, it provides a different perspective as to how the disease moved across Eurasia, driven by climate events that were and still are frequently occurring.

It implies that there might never have been permanent reservoirs of plague among European rodents. While alpine marmots might have been affected and transmitted plague in medieval Europe, we found no indications that they can form a long-term reservoir, as their cousins in Asia do.

Furthermore, the observation that plague disappeared from the European mainland, while outbreaks in the Middle East and northern Africa continued to follow climate events in Central Asia, strongly suggests that the question of why plague disappeared from Europe should be framed not in terms of why its reservoirs disappeared, but of why the disease could no longer spread efficiently across the continent. It gives historians, epidemiologists and biologists new questions to ask in their quest to reconstruct what exactly happened during one of the most devastating pandemics in human history.


This article was originally published on The Conversation.


For over a third of a century, the AIDS pandemic has been a dismal specter for much of the world, claiming at least 22 million lives, and perhaps a great many more before the disease was formally classified in 1982. Another 36.1 million people are living with the disease day to day. At the beginning of the twenty-first century, experts believed that advances in antiretroviral and genetic therapy, such as a synthetic protein that signals the virus to destroy its own molecules, might lead to eradication of the disease by 2050. Now, for the first time in many years, eradication seems like it might be within reach: scientists at the Scripps Research Institute have announced the development of a new vaccine.

HIV, the virus responsible for AIDS, has proven malleable enough to withstand over 30 years of research. Most existing vaccines rely on weakened viruses that a patient’s immune system uses to build a defense, but even a weakened form of HIV-1 could potentially replicate itself, so a traditional vaccine against HIV could cause the very disease it was designed to prevent. The Scripps effort involved researchers from over a dozen institutions and was backed by the National Institute of Allergy and Infectious Diseases. They published their research online Wednesday in the journal Nature, ahead of the print edition.

HIV-1 has a less virulent cousin, HIV-2, which is rare outside Africa and doesn’t always lead to AIDS. The new vaccine, however, has proven effective at blocking both, as well as SIV (simian immunodeficiency virus), the monkey virus that is HIV’s closest relative across species. The vaccine was even tested against higher concentrations of the viruses than occur in human or primate hosts, and has been shown to remain effective for at least eight months after an injection.

“Our compound is the broadest and most potent entry inhibitor described so far,” said Michael Farzan, a professor at Scripps Research and one of the effort’s leaders. “Unlike antibodies, which fail to neutralize a large fraction of HIV-1 strains, our protein has been effective against all strains tested, raising the possibility it could offer an effective HIV vaccine alternative.”

Rather than provoking the body into producing antibodies, the new vaccine prompts muscle cells to produce a new type of protein that blocks the virus from entering cells. These proteins act like antibodies, only with heads and tails that act as spikes against the sites the virus uses to latch onto cell receptors.

When HIV enters the body, it specifically targets CD4 T cells, a type of lymphocyte that is pivotal to the body’s immune system. After attaching to a cell, each virus releases its own genetic material, a ribbon of single-stranded RNA that is copied into DNA and inserted into the cell’s own DNA, turning the cell into an assembly plant for HIV.

The new study is the latest in a long line of discoveries made by Farzan’s laboratory, one of which revealed the presence of a co-receptor on human cells known as CCR5, which contains a specific weakness that allows it to act as a gateway for the virus. Placing a protein over this gateway could potentially prevent HIV infection altogether.

Building on this, Farzan’s team created a protein that targets two sites on the virus’s surface simultaneously, which prevents the virus from using the cell to replicate.

According to research associate Matthew Gardner, the study has also shed light on why human antibodies are generally ineffective against the virus, allowing the infection to spread: “When antibodies try to mimic the receptor, they touch a lot of other parts of the viral envelope that HIV can change with ease. We’ve developed a direct mimic of the receptors without providing many avenues that the virus can use to escape, so we catch every virus thus far.”

To conduct the study, the team “built” a virus of their own: a very small adeno-associated virus that, like HIV, delivers genetic material into cells, but is harmless and caused no disease symptoms in the subjects injected with it. Once the subjects received the trial vaccine, their cells quickly began producing the head and tail proteins, manufacturing enough of them to last potentially for decades, according to Farzan in a press release. The new candidate binds to the virus more effectively than most natural antibodies, and was equally effective at preventing SIV in the macaques that were also included in the study. Farzan’s earlier work on the CCR5 gateway was largely ignored, but he hopes that this new vaccine will help others realize the importance of stopping infections at the gate.


James Sullivan

James Sullivan is the assistant editor of Brain World Magazine and a contributor to Truth Is Cool and OMNI Reboot. He can usually be found on TVTropes or RationalWiki when not exploiting life and science stories for another blog article.